Introducing Open-TQ-Metal: Open-Source Fused Attention for Long-Context LLM Inference

Open-TQ-Metal is an open-source, Metal-native implementation of fused compressed-domain attention that extends Google Research's TurboQuant approach (Zandieh et al., ICLR 2026) to Apple Silicon. It runs Llama 3.1 70B at 128K context on a single 64 GB Mac, a configuration that none of the major open-source inference frameworks (mlx-lm, llama.cpp, Ollama) can reach on this hardware.

Llama 3.1 70B at 128K context needs 79 GB of memory with an FP16 KV cache, so it normally does not fit on a 64 GB Mac. Open-TQ-Metal stores the KV cache as int4, shrinking it from 40 GB to 12.5 GB, and computes attention directly on the compressed data with fused Metal kernels. Now 128K fits - and runs at 6 tok/s.

This is the first implementation of fused compressed-domain attention on Apple Silicon, built across 330 experiments on two model families (Gemma 4 31B and Llama 3.1 70B).

Paper: arxiv.org/abs/2604.16957

Key Contributions

- First Metal implementation of fused compressed-domain attention. Custom Metal compute shaders compute attention directly on int4-quantized KV cache data, with zero intermediate dequantization buffers. 48x speedup at 128K context over the dequantize-then-attend baseline.

- Extended the TurboQuant approach from 8B to 70B. The original TurboQuant paper tested on 8B models with 32 layers. We ran 330 experiments across Gemma 4 31B (60 layers) and Llama 3.1 70B (80 layers), identifying which techniques transfer and which break at depth.

- A cross-architecture quantization finding. A single architectural parameter (attn_scale) determines whether angular quantization methods like PolarQuant succeed or fail - a finding that was not in the original TurboQuant, PolarQuant, or QJL papers.

- Fully open-sourced. Metal shader code, C++ inference engine, and all benchmarks are public.

Overview

Standard int4 inference dequantizes the full key matrix to FP32 before computing attention, wasting bandwidth and memory on temporary matrices that grow with context length.

The fused sdpa_int4 Metal kernel reads packed int4 keys and values directly from device memory, dequantizes per-element via bitwise operations in GPU registers, and computes attention with online softmax - no intermediate matrices ever materialize.
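To make the data flow concrete, here is a minimal CPU reference sketch of the same idea in C++ for a single query vector. It is not the project's Metal shader. The packing layout (two codes per byte, low nibble first), the per-group float `scale`/`bias` pair, and the names `Int4Rows`, `dequant`, and `fused_sdpa_int4` are all assumptions made for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

constexpr int kGroup = 32;  // values sharing one (scale, bias) pair

// Hypothetical packed layout: rows of head_dim values, two 4-bit codes per
// byte (low nibble first), one (scale, bias) pair per group of kGroup values.
struct Int4Rows {
    int head_dim;                     // must be a multiple of kGroup
    std::vector<std::uint8_t> codes;  // rows * head_dim / 2 bytes
    std::vector<float> scale;         // rows * head_dim / kGroup entries
    std::vector<float> bias;          // rows * head_dim / kGroup entries
};

// Dequantize one element with bitwise ops; no row-sized buffer is created.
inline float dequant(const Int4Rows& m, int row, int i) {
    const std::size_t flat = static_cast<std::size_t>(row) * m.head_dim + i;
    const std::uint8_t byte = m.codes[flat / 2];
    const std::uint8_t code =
        static_cast<std::uint8_t>((flat & 1) ? (byte >> 4) : (byte & 0x0F));
    const std::size_t g = flat / kGroup;
    return m.scale[g] * static_cast<float>(code) + m.bias[g];
}

// Single-query attention over int4 keys and values with online softmax.
std::vector<float> fused_sdpa_int4(const std::vector<float>& q,
                                   const Int4Rows& K, const Int4Rows& V,
                                   int n_tokens, float attn_scale) {
    const int d = K.head_dim;
    assert(static_cast<int>(q.size()) == d && V.head_dim == d);
    assert(d % kGroup == 0 && n_tokens > 0);

    float running_max = -std::numeric_limits<float>::infinity();
    float running_sum = 0.0f;
    std::vector<float> acc(d, 0.0f);  // unnormalized weighted sum of values

    for (int t = 0; t < n_tokens; ++t) {
        float score = 0.0f;
        for (int i = 0; i < d; ++i) score += q[i] * dequant(K, t, i);
        score *= attn_scale;

        // Online softmax: rescale earlier contributions when the max grows.
        const float new_max = std::max(running_max, score);
        const float rescale = std::exp(running_max - new_max);  // 0 at t == 0
        const float w = std::exp(score - new_max);
        running_sum = running_sum * rescale + w;
        for (int i = 0; i < d; ++i)
            acc[i] = acc[i] * rescale + w * dequant(V, t, i);
        running_max = new_max;
    }
    for (float& x : acc) x /= running_sum;
    return acc;
}
```

The only per-token state is a running max, a running sum, and one `head_dim`-sized accumulator, so working memory does not grow with context length.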

Split-K parallelism divides long KV sequences into 512-token chunks processed in parallel across Metal's GPU cores, then reduces the partial softmax results via chained MLX Primitives. This design avoids the Metal dispatch race condition (multiple kernels dispatched within a single eval_gpu() execute without barriers between them) and achieves near-flat latency scaling from 1K to 128K tokens.
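The reduction step relies on the fact that softmax partials from independent chunks can be merged exactly. Below is a minimal C++ sketch of that merge, using scalar values and a sequential loop in place of parallel GPU dispatch. The names `Partial`, `chunk_partial`, `merge`, and `split_k_attention` are hypothetical, and the code does not reproduce how the MLX Primitives are chained.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// Partial softmax state for one chunk of the KV sequence.
struct Partial {
    float max_score;  // largest score seen in the chunk
    float sum;        // sum of exp(score - max_score) over the chunk
    float acc;        // sum of exp(score - max_score) * value
};

// Work that one chunk would do independently of all other chunks.
Partial chunk_partial(const std::vector<float>& scores,
                      const std::vector<float>& values,
                      std::size_t begin, std::size_t end) {
    Partial p{-std::numeric_limits<float>::infinity(), 0.0f, 0.0f};
    for (std::size_t t = begin; t < end; ++t)
        p.max_score = std::max(p.max_score, scores[t]);
    for (std::size_t t = begin; t < end; ++t) {
        const float w = std::exp(scores[t] - p.max_score);
        p.sum += w;
        p.acc += w * values[t];
    }
    return p;
}

// Combine two partials by rescaling both to a common maximum.
Partial merge(const Partial& a, const Partial& b) {
    const float m = std::max(a.max_score, b.max_score);
    const float wa = std::exp(a.max_score - m);
    const float wb = std::exp(b.max_score - m);
    return {m, a.sum * wa + b.sum * wb, a.acc * wa + b.acc * wb};
}

// Sequential stand-in for the parallel version: chunk, then reduce.
float split_k_attention(const std::vector<float>& scores,
                        const std::vector<float>& values,
                        std::size_t chunk = 512) {
    assert(!scores.empty() && scores.size() == values.size() && chunk > 0);
    const std::size_t n = scores.size();
    Partial total = chunk_partial(scores, values, 0, std::min(chunk, n));
    for (std::size_t b = chunk; b < n; b += chunk)
        total = merge(total, chunk_partial(scores, values, b, std::min(b + chunk, n)));
    return total.acc / total.sum;
}
```

Because `merge` is associative, the partials can be reduced in any order, which is what lets the chunks run concurrently before a final reduction pass.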

Key Discoveries

The attn_scale Finding

The most surprising result: the attention scale factor - not model size - determines whether angular quantization methods work.

PolarQuant encodes KV vectors as recursive polar coordinates. It works beautifully on Llama (attn_scale = 1/√d = 0.0884) but produces gibberish on Gemma 4 (attn_scale = 1.0). The reason: Gemma's undampened scale amplifies a 4% directional error 25-100x more per layer. Across 60 layers, this compounds through softmax into incoherent output.
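A toy C++ sketch can show the direction of this effect. It holds the raw query-key dot products fixed, which real models do not do, perturbs one of them, and measures how far the attention weights move under each scale. It is not meant to reproduce the 25-100x figure, and the names `softmax_scaled` and `weight_shift` are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Softmax over attn_scale * dots, with max-subtraction for stability.
std::vector<float> softmax_scaled(const std::vector<float>& dots, float attn_scale) {
    assert(!dots.empty());
    float m = dots[0] * attn_scale;
    for (float d : dots) m = std::fmax(m, d * attn_scale);
    std::vector<float> w(dots.size());
    float sum = 0.0f;
    for (std::size_t i = 0; i < dots.size(); ++i) {
        w[i] = std::exp(dots[i] * attn_scale - m);
        sum += w[i];
    }
    for (float& x : w) x /= sum;
    return w;
}

// L1 distance between attention weights from exact and perturbed dot products.
float weight_shift(const std::vector<float>& exact,
                   const std::vector<float>& noisy, float attn_scale) {
    assert(exact.size() == noisy.size());
    const std::vector<float> a = softmax_scaled(exact, attn_scale);
    const std::vector<float> b = softmax_scaled(noisy, attn_scale);
    float l1 = 0.0f;
    for (std::size_t i = 0; i < a.size(); ++i) l1 += std::fabs(a[i] - b[i]);
    return l1;
}
```

For example, with dot products `{2.0, 1.0, 0.0}` and a perturbed copy `{2.0, 1.2, 0.0}`, `weight_shift` is larger at `attn_scale = 1.0` than at `attn_scale = 0.0884`, because the same key error reaches the softmax undamped.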

| Method | Llama 70B (α = 0.0884) | Gemma 31B (α = 1.0) |

|---|---|---|

| PolarQuant 4-bit cosine sim | 0.958 | 0.621 |

| PolarQuant 4-bit output | Coherent | Gibberish |

| Int4 (group=32) cosine sim | 0.998 | 0.992 |

| Int4 (group=32) output | Identical | Excellent |

Int4 per-group quantization works on both architectures because its per-element affine errors are uncorrelated and cancel in dot products - unlike PolarQuant's structured angular bias.
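For reference, per-group affine int4 quantization can be sketched in a few lines of C++. This is a generic min/max scheme with a group size of 32, not necessarily the exact rounding or storage format used in the repos. The names `QuantGroup`, `quantize_group`, `dequantize`, and `roundtrip_cosine` are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

constexpr std::size_t kGroupSize = 32;

struct QuantGroup {
    float scale;                          // step between adjacent 4-bit codes
    float bias;                           // value of code 0 (the group minimum)
    std::uint8_t packed[kGroupSize / 2];  // two codes per byte, low nibble first
};

// Asymmetric (affine) quantization of one group of 32 floats to 4 bits each.
QuantGroup quantize_group(const float* x) {
    const float lo = *std::min_element(x, x + kGroupSize);
    const float hi = *std::max_element(x, x + kGroupSize);
    QuantGroup g{};  // zero-initializes the packed bytes
    g.bias = lo;
    g.scale = (hi > lo) ? (hi - lo) / 15.0f : 1.0f;  // guard against flat groups
    for (std::size_t i = 0; i < kGroupSize; ++i) {
        const int code = static_cast<int>(std::lround((x[i] - lo) / g.scale));
        const std::uint8_t c = static_cast<std::uint8_t>(std::min(15, std::max(0, code)));
        g.packed[i / 2] |= (i % 2 == 0) ? c : static_cast<std::uint8_t>(c << 4);
    }
    return g;
}

// Recover element i from its 4-bit code.
float dequantize(const QuantGroup& g, std::size_t i) {
    const std::uint8_t byte = g.packed[i / 2];
    const std::uint8_t code =
        static_cast<std::uint8_t>((i % 2 == 0) ? (byte & 0x0F) : (byte >> 4));
    return g.scale * static_cast<float>(code) + g.bias;
}

// Cosine similarity between a group and its quantize/dequantize round trip.
float roundtrip_cosine(const std::vector<float>& x) {
    assert(x.size() == kGroupSize);
    const QuantGroup g = quantize_group(x.data());
    double dot = 0.0, nx = 0.0, ny = 0.0;
    for (std::size_t i = 0; i < kGroupSize; ++i) {
        const double y = dequantize(g, i);
        dot += x[i] * y;
        nx += x[i] * x[i];
        ny += y * y;
    }
    return static_cast<float>(dot / std::sqrt(nx * ny));
}
```

Each element's error is bounded by half a quantization step and depends only on where the value falls within its own group, which is consistent with the uncorrelated-error argument above.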

Wired Memory Is Mandatory

A 39 GB model on 64 GB hardware causes macOS to page weights to SSD. One line fixes a 12x slowdown: calling mx::set_wired_limit(max_working_set_size) takes decode throughput from 0.6 to 6.0 tok/s.

Results

Kernel Speedup (Llama 3.1 70B)

| KV Length | Fused Kernel | Baseline (deq + SDPA) | Speedup |

|---|---|---|---|

| 1K | 1.6 ms | 4.6 ms | 3x |

| 16K | 2.7 ms | 54.6 ms | 20x |

| 64K | 5.7 ms | 225.3 ms | 39x |

| 128K | 9.9 ms | 480.6 ms | 48x |

Constant Throughput (Gemma 4 31B)

The fused kernel maintains constant 10 tok/s regardless of context length. The baseline degrades from 10.8 to 7.2 tok/s as dequantization bandwidth increases.

Memory: 128K Context on 64 GB

| Context | FP16 KV | int4 KV | Total (int4) | Fits 64 GB? |

|---|---|---|---|---|

| 16K | 5.0 GB | 1.6 GB | 40.7 GB | Yes |

| 64K | 20.0 GB | 6.3 GB | 45.4 GB | Yes |

| 128K | 40.0 GB | 12.5 GB | 51.6 GB | Yes |

| 236K | – | 23.4 GB | 62.5 GB | Yes (max) |
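The KV columns can be reproduced from the model shape. The C++ sketch below uses Llama 3.1 70B's published attention configuration (80 layers, 8 KV heads, head dimension 128). It assumes each group of 32 int4 codes carries an FP16 scale and an FP16 bias, which gives 5 bits per element and matches the 3.2x compression quoted elsewhere in this post. The function names are hypothetical, and the table's "GB" values are treated as GiB.

```cpp
#include <cassert>
#include <cstdint>

// Llama 3.1 70B attention shape: 80 layers, 8 KV heads (GQA), head dim 128.
constexpr std::uint64_t kLayers = 80, kKvHeads = 8, kHeadDim = 128;
constexpr std::uint64_t kGroup = 32;  // int4 quantization group size

// K and V elements stored per token, summed over all layers.
constexpr std::uint64_t elements_per_token() {
    return 2 * kLayers * kKvHeads * kHeadDim;
}

// FP16 cache: 2 bytes per element.
constexpr std::uint64_t fp16_kv_bytes(std::uint64_t tokens) {
    return tokens * elements_per_token() * 2;
}

// Assumption: 4-bit codes plus one FP16 scale and one FP16 bias per group,
// i.e. (4 * 32 + 16 + 16) bits per 32 elements = 5 bits per element.
constexpr std::uint64_t int4_kv_bytes(std::uint64_t tokens) {
    return tokens * elements_per_token() * (4 * kGroup + 16 + 16) / (8 * kGroup);
}

constexpr double to_gib(std::uint64_t bytes) {
    return static_cast<double>(bytes) / static_cast<double>(1ull << 30);
}
```

Under these assumptions, `to_gib(fp16_kv_bytes(128 * 1024))` is 40.0 and `to_gib(int4_kv_bytes(128 * 1024))` is 12.5. The totals in the table then imply roughly 39.1 GB for the weights (51.6 - 12.5).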

End-to-End

| Framework | tok/s | Max Context (64 GB) | 128K? |

|---|---|---|---|

| Open-TQ-Metal | 6.0 | 236K | Yes |

| mlx-lm | 7.3 | 73K | No |

| llama.cpp | ~5 | ~50K | No |

| Ollama | ~5 | ~40K | No |

Open-TQ-Metal trades 18% throughput for 3.2x context capacity - the only framework that runs Llama 70B at 128K on consumer hardware.

Figure: memory breakdown at each context length, with the 64 GB limit shown as a dashed red line. At 128K, the FP16 KV configuration needs 79.1 GB in total and overflows; Open-TQ-Metal fits in 51.6 GB.

What Didn't Work (and Why)

| Approach | Result | Why |

|---|---|---|

| PolarQuant (Gemma 4) | Gibberish | attn_scale=1.0 amplifies angular error |

| QJL 1-bit (both) | Repetition loops | 0.85^80 ≈ 0 (compound noise) |

| QJL + int4 hybrid | 1.7% savings | Not worth the quality risk |

| Speculative decode (E2B→31B) | 12 tok/s (slower) | 25% acceptance rate (need >60%) |

| int4 KV >950 tok (Gemma) | Quality degrades | Compound error at α=1.0 |

| int8 KV >1K tok (Gemma) | Same failure | Same fundamental limit |

| 2-bit weights | 0.67x slower | MLX 2-bit kernel too slow |

| FP16 intermediates | Slower | Cast overhead |

| async_eval pipelining | Neutral | Mutable KV cache prevents overlap |
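The compounding argument in the QJL row can be checked numerically. The sketch below reads 0.85 as a per-layer fidelity factor that multiplies across layers, which is an interpretation rather than something the table spells out. The function name `compounded_fidelity` is hypothetical.

```cpp
#include <cassert>
#include <cmath>

// Fraction of signal left after `layers` layers if each layer keeps
// `per_layer` of it (assumed to compound multiplicatively).
double compounded_fidelity(double per_layer, int layers) {
    assert(per_layer >= 0.0 && per_layer <= 1.0 && layers >= 0);
    return std::pow(per_layer, layers);
}
```

Under that reading, `compounded_fidelity(0.85, 80)` is on the order of 1e-6 for Llama 3.1 70B's 80 layers and `compounded_fidelity(0.85, 60)` is on the order of 1e-5 for Gemma 4 31B's 60 layers, so the signal is effectively gone in both.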

Paper Comparison

The original TurboQuant paper (ICLR 2026) proposes PolarQuant + QJL on CUDA for 8B models. Open-TQ-Metal ports it to Metal, scales to 70B, and discovers that int4 with fused attention outperforms the paper's pipeline on real hardware.

| | TurboQuant Paper (ICLR 2026, A100, 8B) | Our Open-TQ-Metal Implementation (M1 Max, 70B) |

|---|---|---|

| Compression Method | QJL + PolarQuant 2.5-bit, near-lossless | int4 asymmetric, 3.2x compression |

| Fused Kernel | CUDA, standard sizes | Metal, split-K; 48x speedup at 128K |

| KV at 128K (70B) | Not tested at 70B | 12.5 GB (fits 64 GB with 11.5 GB headroom) |

| QJL 1-bit keys | Works on 8B (32 layers) | Fails on 70B (80 layers); error compounds |

| PolarQuant | Works (4.2x compression) | Works (cosine 0.989) but slower than int4 |

| Model Scale | 7-8B parameter models | 70B parameters; first Apple Silicon port |

| Speed | Not reported for 70B on Apple Silicon | 6.0 tok/s decode (mlx-lm: 7.3 tok/s) |

| Quality | Near-lossless (0.997 recall) | Coherent text for 200+ tokens; matches mlx-lm top-1 |

Run It Yourself

The inference engines are in separate repos, each with full build instructions, pretrained weight loaders, and streaming chat interfaces:

| Repo | Model | Speed | Context | Hardware |

|---|---|---|---|---|

| gemma4metal | Gemma 4 31B | 10 tok/s (fused) / 59 tok/s (MoE) | 950 tok | M1/M2/M3/M4, 32 GB+ |

| turboquant-llama3.170B | Llama 3.1 70B | 6 tok/s | 128K tok | M1 Max+, 64 GB |

Both are C++ and Metal with Python only for tokenization. Clone, build MLX from source, cmake && make, and chat.

How We Discovered These Breakthroughs: The Ensue Agent Swarm

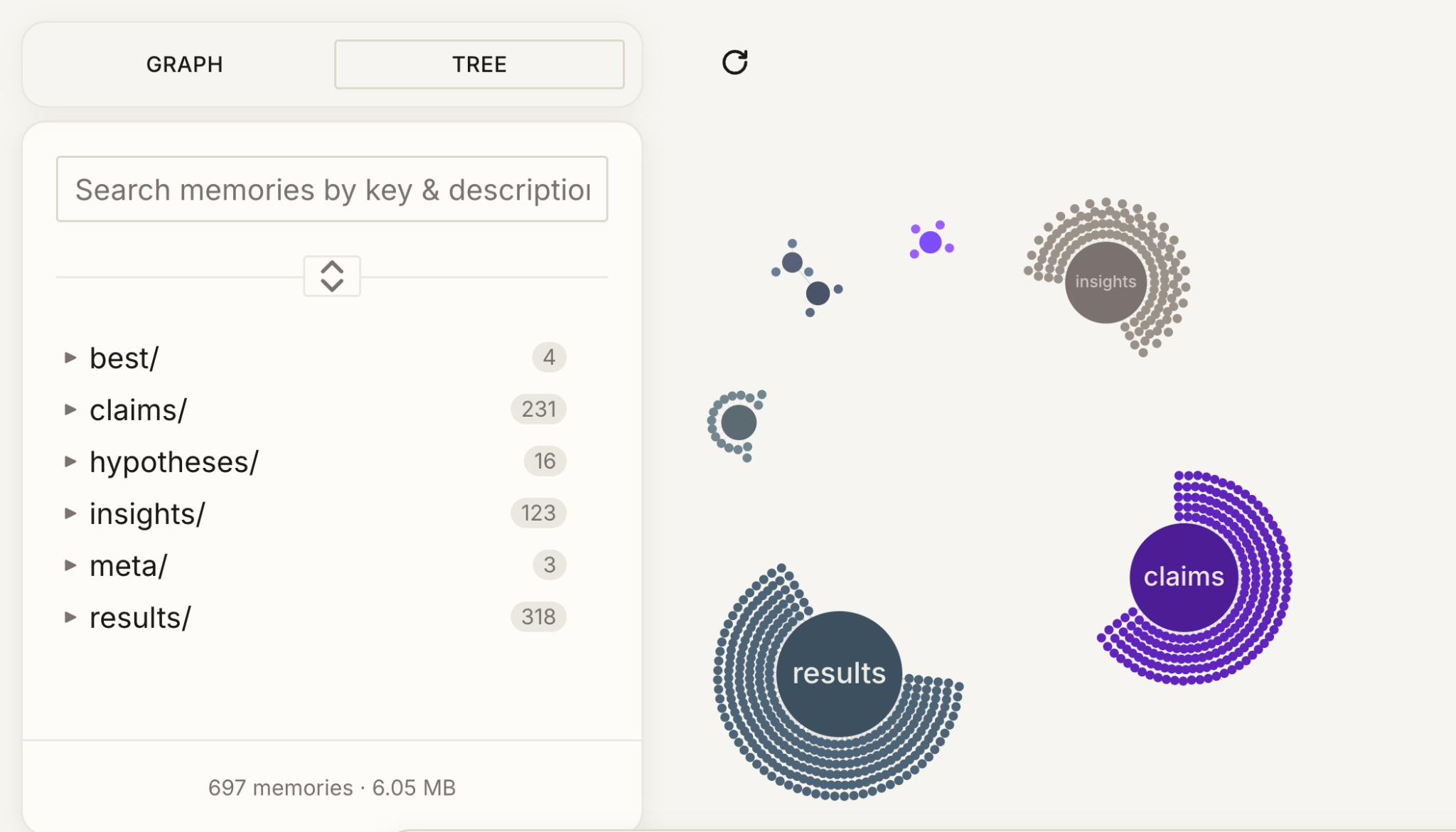

The 330-experiment sweep across Gemma 4 31B and Llama 3.1 70B was done with our agent swarm and coordinated over a shared memory layer, Ensue.

A swarm of orchestrator agents worked in parallel, each directing sub-agents on separate Macs. Each relied on custom retrieval systems that sift through memories organized under one data hierarchy. The memories were indexed by insights, hypotheses, task delegation, and reasoning. The aim was an efficient, asynchronous sweep of the optimization frontier using diverse, creative experiments.

Our sub-agents fell into seven roles: hardware profiler, data diagnostician, model builder, experiment runner, plateau analyst, validator, and reflector. Each provided separate reasoning traces with complementary agentic loops that enhanced the capability of the overall swarm. The cross-experiment synthesis, which connects findings across architectures, parameter sweeps, and differing philosophies, culminates in an enriched collective intelligence.

Optimizing ML Systems

The work behind Open-TQ-Metal (kernel-level optimization, cross-architecture analysis, and agent-coordinated experiment sweeps) is the kind of ML systems work we do for clients.

Check out our previous work: how we compressed months of ML work to 48 hours, how we fixed an LLM inference slowdown, and other case studies.

If you're hitting memory walls, scaling a paper's approach to your stack, or trying to ship inference optimizations, book a call or contact us.